
Tube Amp Biasing, and how not to get killed doing it.

The Fender Blues Deluxe has only one bias adjustment pot because a single bias supply feeds both power tubes. It is a fixed-bias amp, not a cathode-biased one. A cathode-biased amp is self biasing and normally has no bias pot at all. The single adjustment pot on the Blues Deluxe raises or lowers the bias voltage, and with it the current, on both tubes together. Biasing is important because power tubes may draw different currents due to imperfections in manufacturing. Amps with two adjustment pots, one per tube, to compensate for the differences in the tubes are usually not cathode biased, but can be. If you look at the blurry picture (sorry) of a circuit board below, notice the two blue adjustment pots between the white resistors and behind the orange capacitors. These are the adjustments on my Line 6, and they are typical of what you'll find on a tube amp with separate adjustments. Personally I prefer having a bias adjustment for each tube so that I can experiment with different brands of power tubes in the same amp. If you have one bias adjustment and need to replace tubes, buy a matched pair. This means that they have been tested and draw similar current. Thanks for reading, JB.

I currently own two guitar tube amps, and biasing, or at least checking the bias, is just part of owning a tube amp. Before I get into the biasing stuff I'd like to point out one important thing: YOU CAN DIE DOING THIS. I'm not one for the dramatic, but messing around with DC voltages in the 400V range is potentially deadly. Do yourself a favor and follow the one hand rule: only one hand in the amp at a time.

Tube amp biasing is nothing new; tubes have been around for a long time. Essentially, biasing means making sure each tube is doing its fair share of the work, and that neither tube is doing too much work. (There are amps with two power tubes, four, and more. The concept remains the same.) Tubes, unlike their solid state counterparts, transistors, are imperfect because they are assembled by hand. The problem is that no two tubes are exactly alike. Now you can buy a matched set of tubes, but match means "close match". So we must bias the tubes so they'll last, and perform as we'd like. Look at tube biasing like this. If you have a team of mules, one strong mule and one weak mule, the strong mule is going to get tired of making up for the weak mule and die. Similarly, if we overwork the two mules by running them too hot, they'll both die. Is this making any sense? The point is that, like a team of mules, each tube must be doing around the same amount of work, and no tube should be run at more current than recommended.
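If it helps to see the two mule problems spelled out, here is a minimal Python sketch. The function name `bias_problems` and the limits used in the example calls (40 mA maximum, 2 mA allowed spread) are made-up illustration values, not recommendations for any real amp. Use the numbers from your amp's manufacturer.

```python
def bias_problems(currents_ma, max_ma, max_spread_ma):
    """Return a list of problems with a set of tube currents (in mA).

    An empty list means the tubes are sharing the work and none is too hot.
    max_ma and max_spread_ma are hypothetical limits supplied by the caller.
    """
    problems = []

    # Problem 1: one mule is pulling harder than the other.
    spread = max(currents_ma) - min(currents_ma)
    if spread > max_spread_ma:
        problems.append(f"tubes are mismatched by {spread:.1f} mA")

    # Problem 2: a mule is being overworked.
    for number, current in enumerate(currents_ma, start=1):
        if current > max_ma:
            problems.append(f"tube {number} is running hot at {current:.1f} mA")

    return problems


# Illustrative limits only: 40 mA maximum, 2 mA allowed spread.
print(bias_problems([35.0, 35.1], max_ma=40, max_spread_ma=2))
print(bias_problems([28.0, 32.0], max_ma=40, max_spread_ma=2))
```

The first call describes a well matched pair and returns an empty list. The second describes a pair that is 4 mA apart and reports the mismatch.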

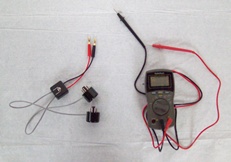

Take a look at the picture above with the meter. Next to the meter is a tube bias tester. Get one of these, it may save your life. You plug the tubes into the tester, then plug the tester into the amp's tube sockets. The tester has a switch box with a one ohm shunt resistor, which lets you read the tube current as millivolts on the meter and switch between the tubes for bias testing. The picture to the right shows my finger pointing to a trim pot. This is what you turn when adjusting the bias voltage. This being the first time I checked the bias on this Fender Blues Deluxe, I found it unusual. There are typically two trim pots, like on my Line 6, so that you can bias each tube separately. On this amp you can only raise and lower the voltage on both tubes at the same time. When I find out why I'll post it here. Until then, remember that two pots are the norm, and what you're most likely to find.

Now take a look at the two meter readings above. They are within a tenth of a millivolt of one another. This is close to perfect, meaning each tube is drawing almost the identical amount of current. I love the way the amp sounds, so I'm not going to adjust anything. Typically when installing new tubes there will be a difference of a few millivolts that you must adjust for. For example, I put new tubes in my Line 6 amp. The manufacturer's recommendation is 35 millivolts per tube on the tester, which is 35 milliamps. Once installed, the tubes read 28 mV and 32 mV, so I had to adjust the two trim pots so that each tube read 35 mV.

If you're a little confused by reading the tube amp bias in millivolts, here's what's going on. As I mentioned, the tube amp tester comes with a one ohm shunt resistor. Ohm's law is Voltage = Current * Resistance, V = I * R, so if R is one ohm, then V = I * 1, and V and I are the same number. A reading of 35 millivolts means 35 milliamps are flowing through the tube.

The tester shown above is around $45.00. You can adjust tube amp bias without a tester if you know where to test from. I thought about it for a while, and figured I'd rather spend fifty bucks than DIE. Call me crazy, but if you're going to be messing around with tubes, get the tester. Good luck, JB
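To make the millivolts-to-milliamps bit concrete, here is a small Python sketch of the arithmetic, using the Line 6 readings from above (28 mV and 32 mV, with a 35 mA target). The function names are my own, and the code assumes the tester's shunt really is one ohm. It only does the math; it does not tell you which way to turn a trim pot.

```python
SHUNT_OHMS = 1.0  # assumption: the bias tester uses a one ohm shunt resistor


def shunt_mv_to_ma(millivolts, shunt_ohms=SHUNT_OHMS):
    """Ohm's law rearranged, I = V / R. Millivolts over ohms gives milliamps."""
    return millivolts / shunt_ohms


def adjustment_needed_ma(reading_mv, target_ma, shunt_ohms=SHUNT_OHMS):
    """How far the tube current is from the target (positive means raise it)."""
    return target_ma - shunt_mv_to_ma(reading_mv, shunt_ohms)


# Readings from the article's Line 6 example, target of 35 mA per tube.
for tube, reading_mv in enumerate([28.0, 32.0], start=1):
    current = shunt_mv_to_ma(reading_mv)
    delta = adjustment_needed_ma(reading_mv, target_ma=35.0)
    print(f"tube {tube}: {current:.0f} mA now, raise by {delta:.0f} mA")
```

With a one ohm shunt the conversion is a divide by one, which is exactly why the meter reading and the tube current come out as the same number. With any other shunt value the two would differ, so check what your tester actually uses.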


If you have any questions regarding electric guitar modifications, building, setup, amplifiers, etc., send me an email at Guitarinfo145@aol.com. All questions are appreciated, and often lead to new ideas for additional content. I do my best to get back to you as soon as possible. Thanks, J.B.